optimization - How to show that the method of steepest descent does not converge in a finite number of steps? - Mathematics Stack Exchange


Description

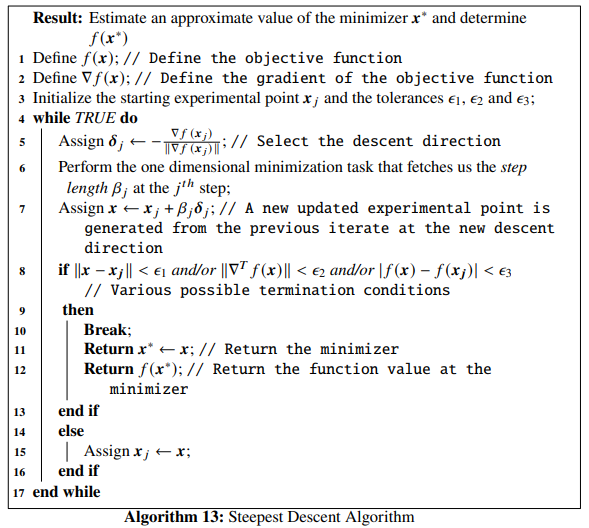

I have a function,

$$f(\mathbf{x})=x_1^2+4x_2^2-4x_1-8x_2,$$

which can also be expressed as

$$f(\mathbf{x})=(x_1-2)^2+4(x_2-1)^2-8.$$

I've deduced the minimizer $\mathbf{x}^*$ as $(2,1)$ with $f^*$ …
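To make the question concrete, here is a minimal sketch of steepest descent with exact line search on this particular $f$, written in exact rational arithmetic so that "never reaches the minimizer" is not blurred by rounding. The function names (`gradient`, `steepest_descent_step`, `iterate`) and the starting point $(0,0)$ are assumptions chosen for illustration; they are not part of the original question. For a quadratic with Hessian $Q=\operatorname{diag}(2,8)$ the exact step length along $-\mathbf{g}$ is $\alpha=\mathbf{g}^\top\mathbf{g}/(\mathbf{g}^\top Q\,\mathbf{g})$.

```python
from fractions import Fraction

# f(x) = x1^2 + 4*x2^2 - 4*x1 - 8*x2, Hessian Q = diag(2, 8), minimizer (2, 1).
Q_DIAG = (Fraction(2), Fraction(8))
X_STAR = (Fraction(2), Fraction(1))


def gradient(x):
    """Gradient of f at x = (x1, x2)."""
    return (2 * x[0] - 4, 8 * x[1] - 8)


def steepest_descent_step(x):
    """One steepest descent step with exact line search (illustrative helper)."""
    g = gradient(x)
    gg = g[0] * g[0] + g[1] * g[1]
    if gg == 0:
        return x  # already at the minimizer
    gQg = Q_DIAG[0] * g[0] * g[0] + Q_DIAG[1] * g[1] * g[1]
    alpha = gg / gQg  # exact minimizer of f(x - alpha * g) for a quadratic
    return (x[0] - alpha * g[0], x[1] - alpha * g[1])


def iterate(x0, steps):
    """Return the list of iterates x0, x1, ..., x_steps as exact fractions."""
    xs = [tuple(Fraction(c) for c in x0)]
    for _ in range(steps):
        xs.append(steepest_descent_step(xs[-1]))
    return xs


if __name__ == "__main__":
    for k, x in enumerate(iterate((0, 0), 5)):
        print(k, x, "at minimizer:", x == X_STAR)
```

Starting from $(0,0)$, the first iterate is $(10/17,\,20/17)$, and no later iterate equals $(2,1)$ exactly. The reason shows up in the error $\mathbf{e}=\mathbf{x}-\mathbf{x}^*$: each step multiplies its components by $(1-2\alpha)$ and $(1-8\alpha)$, and when both gradient components are nonzero, $\alpha$ lies strictly between $1/8$ and $1/2$, so neither factor vanishes. By contrast, a start such as $(0,1)$, whose error is along an eigenvector of $Q$, reaches $(2,1)$ in a single step.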

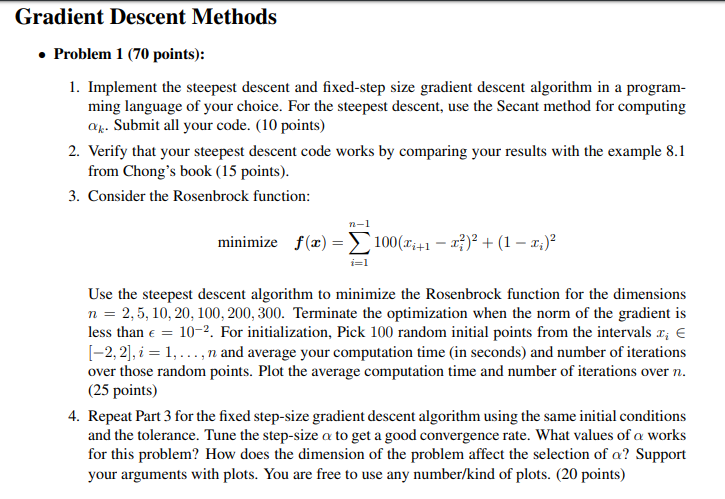

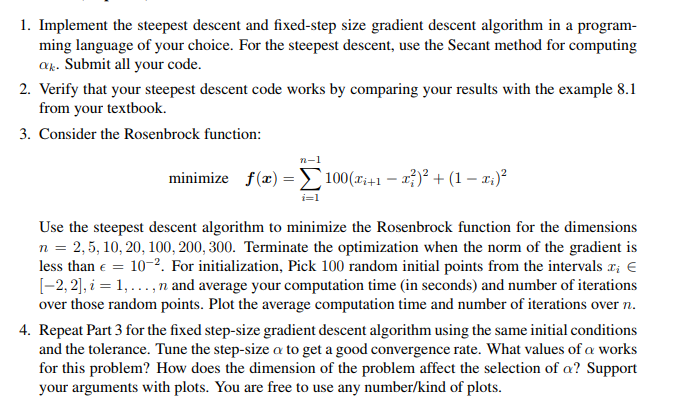

Gradient Descent Methods. Problem 1 (70 points): 1.

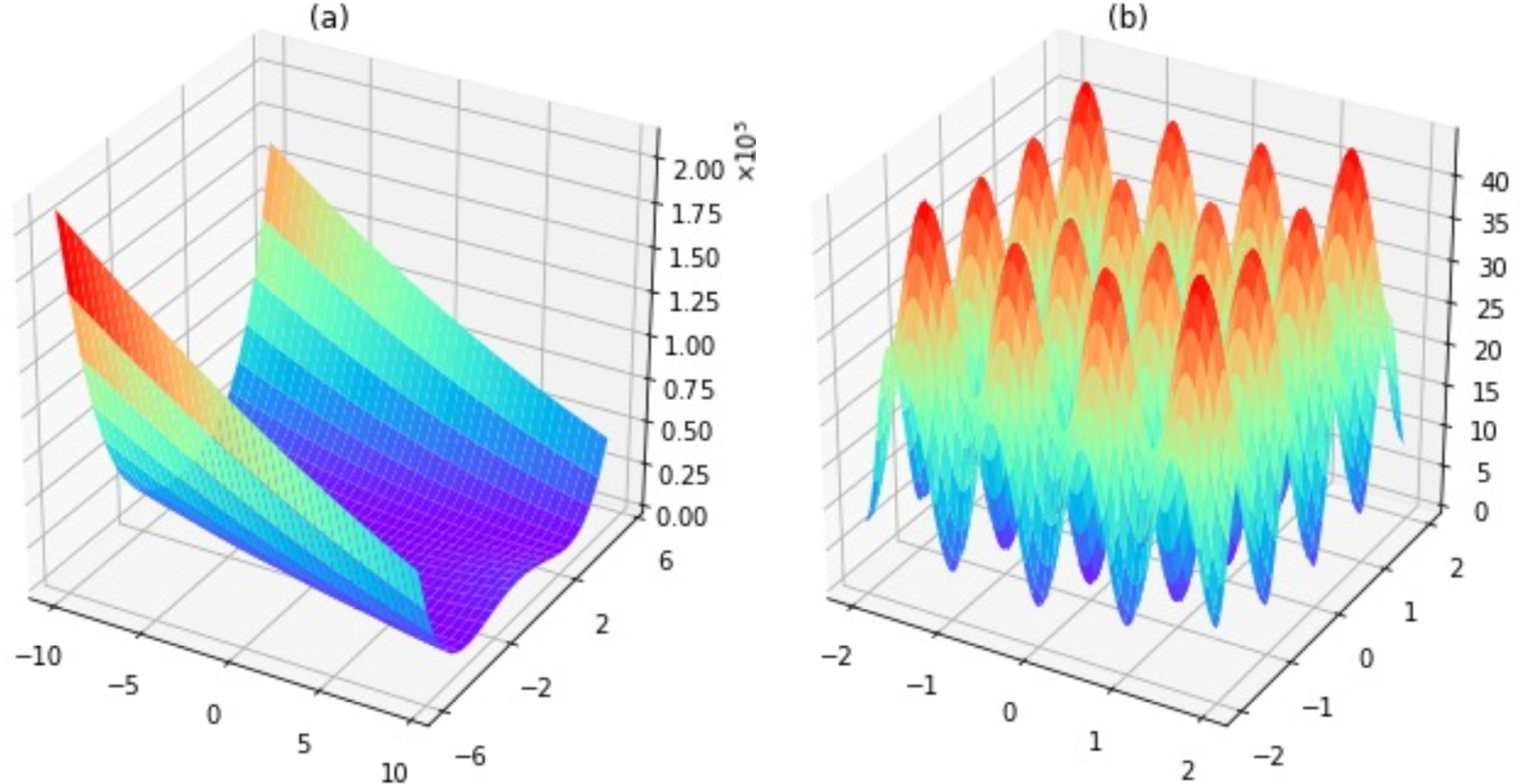

nonlinear optimization - Do we need steepest descent methods, when minimizing quadratic functions? - Mathematics Stack Exchange

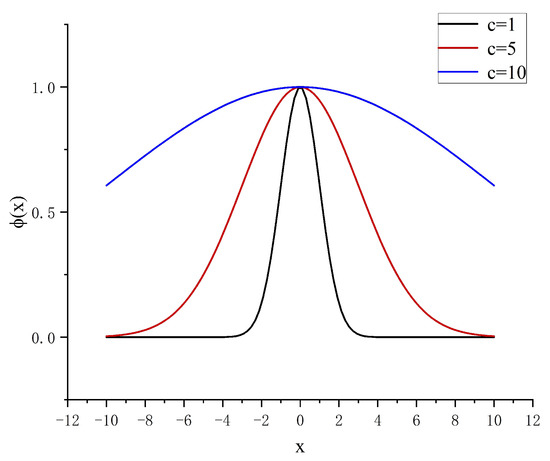

Mathematics, Free Full-Text

convergence divergence - Interpretation of Noise in Function Optimization - Mathematics Stack Exchange

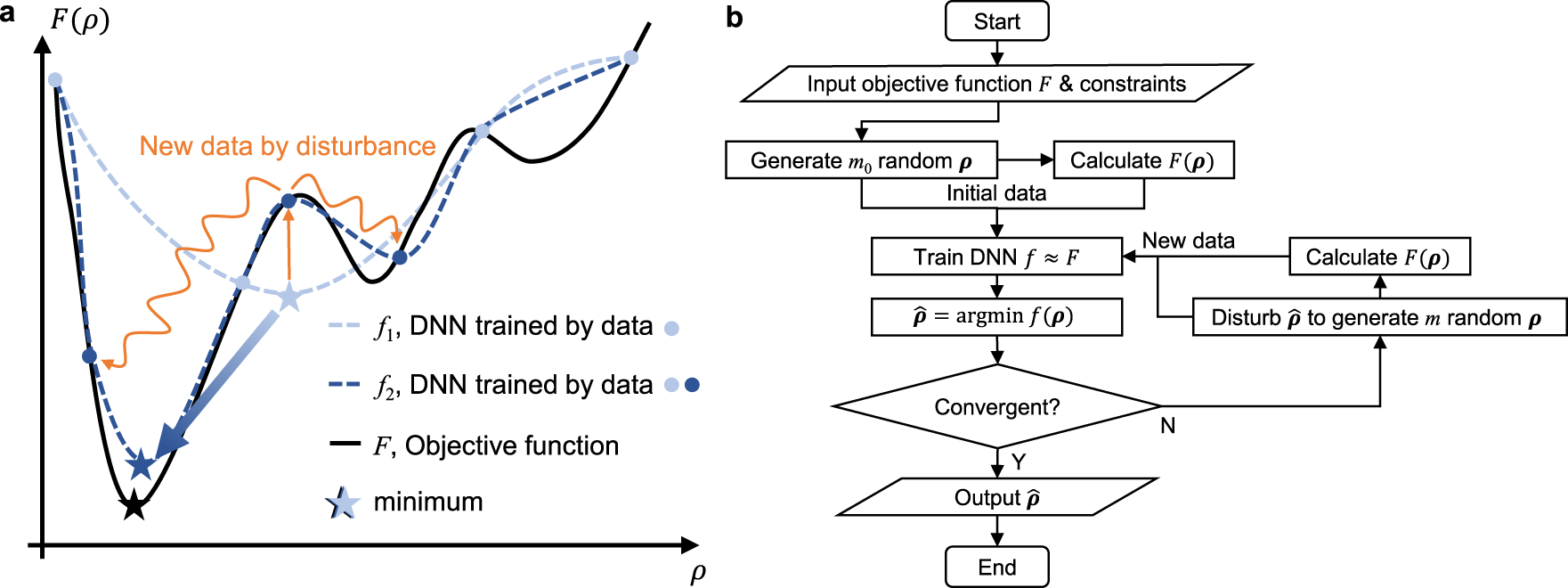

Bayesian optimization with adaptive surrogate models for automated experimental design

Steepest Descent Method - an overview

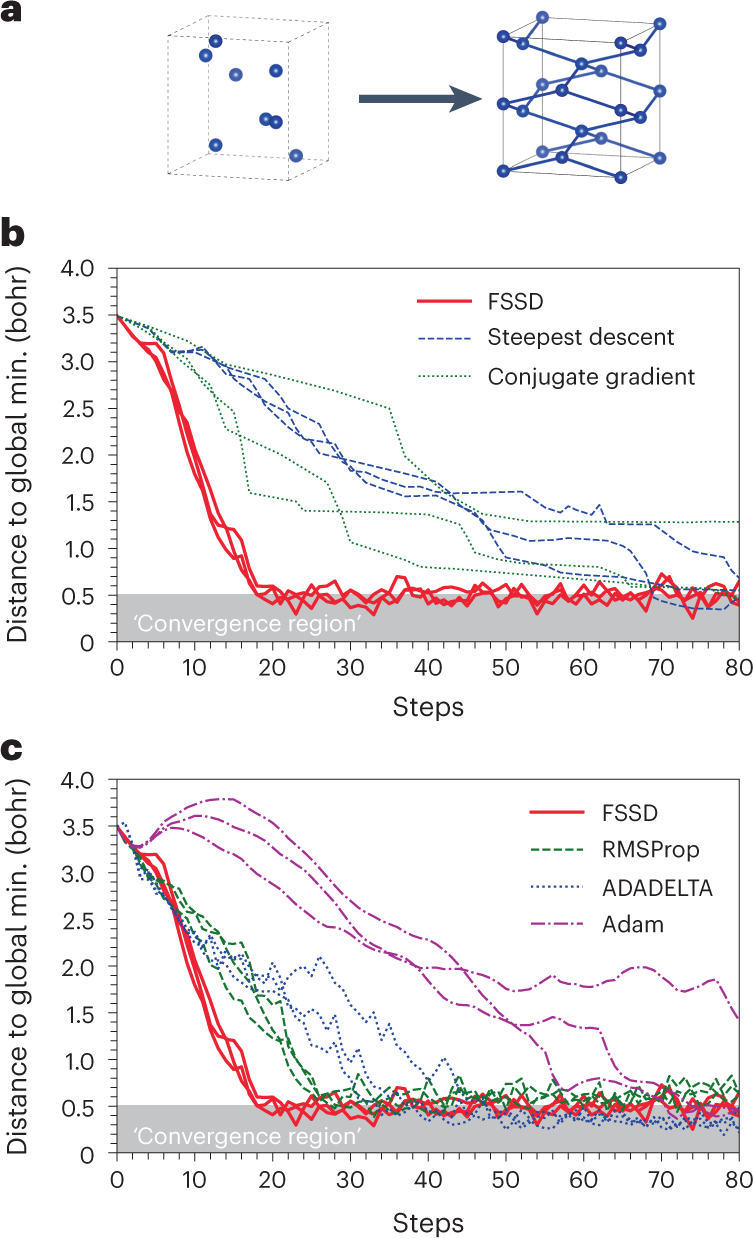

A structural optimization algorithm with stochastic forces and stresses

Self-directed online machine learning for topology optimization

Solved 1. Implement the steepest descent and fixed-step size

Restricted-Variance Molecular Geometry Optimization Based on Gradient-Enhanced Kriging

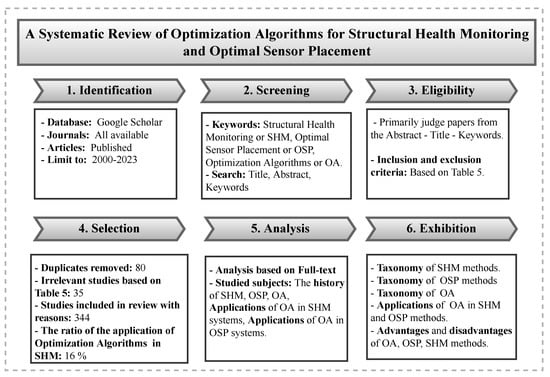

Sensors, Free Full-Text

Illustration of the TOBS with geometry trimming procedure (TOBS-GT)
